Artificial Intelligence (AI) is becoming increasingly important in the banking sector, with uses ranging from more efficient processes and improved decision support to enhanced analytical capabilities in bank management. At the same time, AI creates environmental, social, and governance (ESG) risks whose effects often become visible only in the medium to long term. Publications by international institutions such as the OECD show that credit institutions already use AI applications in several core processes, especially risk management, fraud prevention, and customer service, even though maturity and breadth of adoption vary significantly by institution. Supervisory authorities are also increasingly using AI, as an IMF paper shows, which may indirectly raise supervisory expectations for institutions.

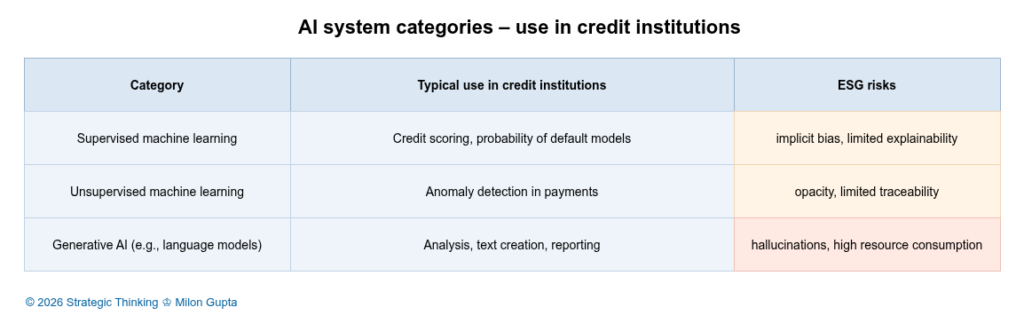

Depending on the area of application, different types of AI systems are used. Machine-learning methods have been an established part of processes in areas such as credit scoring and fraud detection for years. The use of generative AI, by contrast, has so far remained limited, because its use in day-to-day operations still raises difficult technical and regulatory questions.

Figure 1: AI System Categories – Use in Credit Institutions

Behind the umbrella term AI, three categories of AI systems are commonly found in the banking context (see table): supervised machine learning, unsupervised machine learning, and generative AI, which includes large language models. The ESG risks within each category can differ significantly in individual cases, depending on the data basis, the AI model, the use context, and governance.

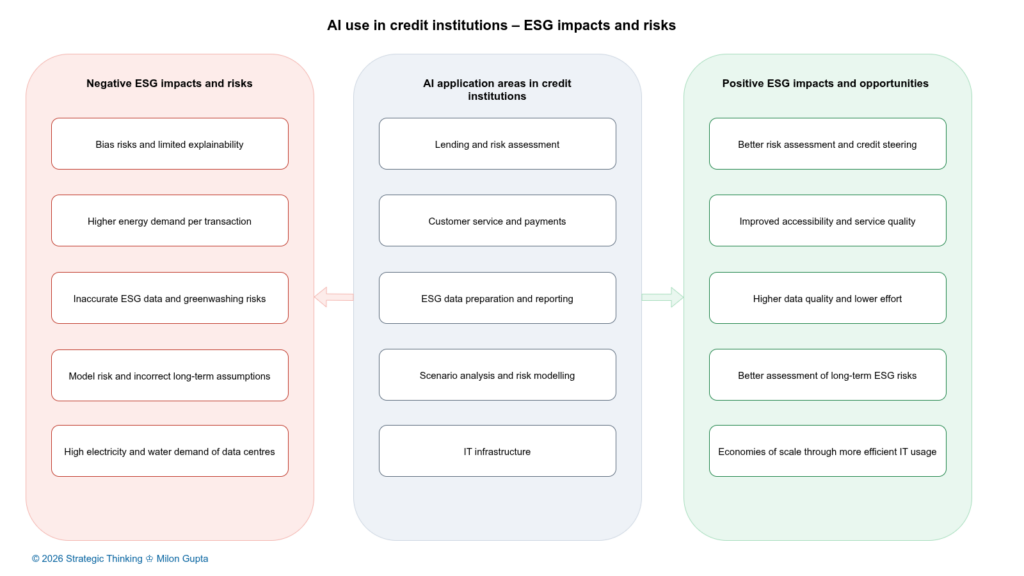

The central question is therefore how credit institutions can use AI systems so that efficiency and management benefits are realized without creating or amplifying environmental burdens, social risks, or governance deficits. This requires a systematic approach that identifies and assesses opportunities and risks along the ESG dimensions and integrates them into existing management and control systems.

ESG Challenges When Using AI in Credit Institutions

The fields of application below are not exhaustive, but they give a realistic picture of the diverse ESG-relevant challenges that AI applications raise in the financial sector.

Figure 2: AI Use in Credit Institutions – ESG Impacts and Risks

Lending and Risk Assessment

In lending and risk assessment, AI-supported methods are used to automate creditworthiness assessments, differentiate risks more granularly, and make decision processes more efficient. In particular, supervised machine-learning models can achieve higher predictive performance than traditional statistical methods. At the same time, they entail social and governance risks: biases in training data can reinforce discriminatory effects, and complex models often reduce the traceability of individual decisions.

From a regulatory perspective, this area of application is particularly sensitive. The EU AI Act, adopted in 2024, takes a risk-based approach and classifies AI systems used to assess the creditworthiness of natural persons as high-risk. Such systems are subject to strict requirements, for example regarding data quality, documentation, transparency, and human oversight.

For AI to contribute overall to more stable decisions in credit processes without coming into conflict with the AI Act, it should be used primarily for decision support. Targeted risk-mitigation measures are required for this. These include clear boundaries of use, model logic that is as traceable as possible, strong validation and monitoring processes, and clearly defined responsibilities.

Customer Service and Payments

In customer service, AI is often used in the form of chatbots and voicebots to automate responses to standard inquiries and enable 24/7 availability. In payments—and especially in anomaly and fraud detection—machine-learning processes are used to recognize patterns in transaction data in near real time.

The efficiency gains from using AI in customer service and payments come with potentially significant environmental and social impacts. Real-time monitoring increases computational load and therefore energy demand. In the social domain, there is also the risk of indirect exclusion if AI-enabled self-service channels become the standard and alternative access routes effectively lose relevance or are associated with longer waiting times. Data protection and transparency issues are central in this context, because customer data are processed on a large scale. The guidelines on automated decision-making and profiling, endorsed by the European Data Protection Board in 2018, already provide recommendations on this.

The positive effects of AI become apparent where, in practice, it leads to fewer fraud cases and lower losses. To realize the benefits and minimize the downsides, a mix of measures is sensible: reducing unnecessary compute load, bias checks (e.g., for false positives), clear customer communication, and operational controls that monitor model behavior over time.
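One way to make the bias check for false positives concrete is to compare false-positive rates across customer groups. The following is a minimal sketch, not a prescribed method: the function name, the record layout `(group, is_fraud, was_flagged)`, and the sample data are all assumptions made for illustration.

```python
from collections import defaultdict


def false_positive_rate_by_group(records):
    """Return {group: FPR}, where FPR = flagged legitimate / all legitimate.

    Each record is a hypothetical tuple (group, is_fraud, was_flagged).
    Groups without any legitimate transactions are omitted.
    """
    flagged = defaultdict(int)  # legitimate transactions wrongly flagged
    total = defaultdict(int)    # all legitimate transactions
    for group, is_fraud, was_flagged in records:
        if is_fraud:
            continue  # true fraud cases do not count toward the FPR
        total[group] += 1
        if was_flagged:
            flagged[group] += 1
    return {group: flagged[group] / total[group] for group in total}


# Illustrative data only: group labels "A" and "B" are placeholders.
records = [
    ("A", False, True), ("A", False, False),
    ("A", False, False), ("A", False, False),
    ("B", False, True), ("B", False, True),
    ("B", False, False), ("B", False, False),
    ("B", True, True),
]
rates = false_positive_rate_by_group(records)
```

In this toy data set, group B's legitimate transactions are flagged twice as often as group A's. Tracking such a gap over time would be one possible input to the operational controls mentioned above; which groups to compare and which gap is acceptable remain institution-specific decisions.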

ESG Data Preparation and Reporting

In credit institutions, preparing ESG data is often characterized by heterogeneous data sources, data gaps, and inconsistencies. AI can help extract, structure, and plausibility-check unstructured information, for example from sustainability reports of corporate borrowers. This works especially well when clearly defined data models and quality rules exist.

As regulatory reporting and audit pressure increases, governance risks also rise: if data sources, assumptions, extraction logic, and audit trails are not documented, audit and reputational risks may emerge. Generative AI adds the risk of hallucinations, that is, convincingly worded statements that are fabricated. Without systematic human-in-the-loop review, even a small number of AI-generated factual errors can negate the efficiency gains.

For AI to actually generate a net benefit in ESG data preparation, clear rules are needed: pre-vetted and selected sources, traceability of the data chain, defined quality metrics, documented review processes, and clearly regulated roles and responsibilities.
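The rules above can be sketched as a simple data record that carries its own provenance and review status. This is only an illustration under stated assumptions: the class `ExtractedDataPoint`, its field names, the `completeness` metric, and the sample values are hypothetical and not taken from any standard or tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ExtractedDataPoint:
    """One AI-extracted ESG value together with its data-chain metadata."""
    metric: str
    value: float
    unit: str
    source_document: str   # pre-vetted source the value was taken from
    source_page: int       # where a reviewer can find the value
    extraction_method: str # e.g. model or rule set that produced the value
    reviewed_by: Optional[str] = None  # set once a human has checked it

    def is_reportable(self) -> bool:
        # Only human-reviewed values may flow into reporting.
        return self.reviewed_by is not None


def completeness(points, required_metrics):
    """Share of required metrics covered by at least one reviewed data point."""
    covered = {p.metric for p in points if p.is_reportable()}
    return len(covered & set(required_metrics)) / len(required_metrics)


# Illustrative values only.
points = [
    ExtractedDataPoint("scope1_emissions", 1200.0, "tCO2e",
                       "borrower_report_2024.pdf", 17, "llm_extraction",
                       reviewed_by="analyst_1"),
    ExtractedDataPoint("scope2_emissions", 800.0, "tCO2e",
                       "borrower_report_2024.pdf", 18, "llm_extraction"),
]
coverage = completeness(points, ["scope1_emissions", "scope2_emissions"])
```

Here only one of the two required metrics has passed human review, so the completeness metric is 0.5. The point of the design is that an unreviewed value cannot silently count as covered.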

Scenario Analysis and Risk Modelling

AI opens up new possibilities for analyzing complex risk developments. This includes, for example, nonlinear system-dynamic developments in climate scenarios—such as those of the Network for Greening the Financial System (NGFS)—or combined macroeconomic shocks. At the same time, model complexity increases. This makes validation, interpretation, and integration into existing management processes more difficult, including the management of model risk.

Especially for ESG risks with long time horizons, there is a risk of spurious precision: models can produce precise numbers even though the underlying data contain high uncertainty. The NGFS emphasizes that scenarios should be understood as “if–then” logic and not as forecasts with probabilities. The results should therefore always be considered as context-dependent possible developments.
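One simple guard against spurious precision is to report scenario results as rounded values and as a range across scenarios, without attaching probabilities. The sketch below assumes hypothetical scenario labels and loss figures; the helper names `round_sig` and `summarize_scenarios` are illustrative only.

```python
import math


def round_sig(x, sig=2):
    """Round x to `sig` significant figures."""
    if x == 0:
        return 0.0
    digits = sig - 1 - math.floor(math.log10(abs(x)))
    return round(x, digits)


def summarize_scenarios(losses_by_scenario, sig=2):
    """Return rounded per-scenario results plus the range they span.

    No probabilities or averages are computed: each scenario stays an
    "if-then" statement rather than a forecast.
    """
    rounded = {name: round_sig(v, sig) for name, v in losses_by_scenario.items()}
    return {
        "by_scenario": rounded,
        "low": min(rounded.values()),
        "high": max(rounded.values()),
    }


# Placeholder labels and loss figures (e.g. in EUR thousand).
losses = {"scenario_a": 1234.5, "scenario_b": 1872.3, "scenario_c": 2610.9}
summary = summarize_scenarios(losses)
```

With two significant figures, the summary reports a range of 1200 to 2600 instead of three seemingly exact numbers. How many significant figures the underlying data can support is a judgment for model validation, not something the code can decide.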

In short, AI-supported scenarios are useful as a complement to expert judgment, not as a substitute for it. From a governance perspective, uncertainties should be explicitly disclosed, model limits documented, and qualitative classifications systematically embedded.

IT Infrastructure

IT infrastructure forms the basis for all AI applications. Data centers, cloud services, and specialized hardware create environmental burdens through energy and water consumption in ongoing operations as well as through resource demand in hardware production. In its report Energy and AI (April 2025), the International Energy Agency (IEA) notes that AI workloads are a key driver of rising energy demand in data centers.

For credit institutions, two key steering levers follow from this. The first is transparency and management of the infrastructure footprint, for example through energy sources, efficiency metrics, and workload optimization. The second is consistent governance of relationships with IT service providers and suppliers, including data sovereignty and concentration risks with large cloud providers (see the ECB guide on outsourcing cloud services).
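To illustrate the first lever, a rough footprint estimate for a single AI workload can be derived from device power draw, runtime, the data center's power usage effectiveness (PUE, total facility energy divided by IT energy), and the carbon intensity of the electricity used. This is a back-of-the-envelope sketch: the function name and all input values are assumptions, and real figures would have to come from the provider or from measurement.

```python
def estimate_footprint(device_count, power_kw_per_device, hours,
                       pue, grid_kg_co2e_per_kwh):
    """Return (facility_energy_kwh, emissions_kg_co2e) for one workload."""
    # Energy drawn by the IT equipment itself.
    it_energy_kwh = device_count * power_kw_per_device * hours
    # PUE scales IT energy up to total facility energy (cooling, losses, ...).
    facility_energy_kwh = it_energy_kwh * pue
    # Emissions depend on the carbon intensity of the electricity used.
    emissions_kg = facility_energy_kwh * grid_kg_co2e_per_kwh
    return facility_energy_kwh, emissions_kg


# Hypothetical inputs: 8 accelerators at 0.4 kW for 100 hours,
# PUE of 1.25, grid intensity of 0.3 kg CO2e per kWh.
energy_kwh, emissions_kg = estimate_footprint(8, 0.4, 100, 1.25, 0.3)
```

With these placeholder inputs the estimate comes to roughly 400 kWh and 120 kg CO2e. The calculation ignores water consumption and the embodied emissions of hardware production, both of which the text above also names as burdens.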

Propositions on the Sustainable Use of AI in Credit Institutions

The challenges presented show that ESG-compliant use of AI is a complex steering task. It requires integrating technology, processes, data, employees, and service providers into an ESG-compliant, institution-specific AI strategy. Even though there is no universally applicable AI strategy for all institutions, the following propositions are intended as a stimulus for further discussion.

Proposition 1: ESG-Positive Effects of AI Arise Primarily Through Decision Support, Not Through Full Automation

Where AI supports employees, for example in prioritization, plausibility checks, or pattern recognition, institutions can, with appropriate design, achieve efficiency gains without unnecessarily increasing governance and social risks.

Proposition 2: Sustainable Use of AI Is Primarily a Governance Issue and Only Secondarily a Technology Issue

Governance primarily determines how sustainably institutions use AI. This includes transparency, clearly defined responsibilities, documented boundaries of use, validation, monitoring, and auditability. These factors determine whether AI remains manageable in day-to-day banking operations. Regulation in the form of the AI Act and the GDPR sets high requirements in this context. Technological implementation is also important for sustainability, but it only becomes effective on the basis of mature AI governance.

Proposition 3: ESG Risks of AI Arise to a Significant Extent Outside the Institution

A large share of the environmental and social impacts is tied to the data centers, data sources, and models of external providers. Institutions' procurement, outsourcing, and digital strategies are therefore central to minimizing externally caused ESG risks in the AI value chain and should systematically integrate ESG aspects of AI. As BaFin emphasizes, transparency is the key to the safe use of third-party AI services.

Conclusion

Expanding the use of AI offers credit institutions significant opportunities to make processes more efficient and to achieve a more precise level of management and control. At the same time, ESG risks arise that, without robust governance, conscious model use, and transparent infrastructure decisions, can lead to negative environmental and social impacts. Sustainable use of AI therefore does not mean maximum use wherever technically possible, but rather targeted and controlled integration into existing risk and management systems.

Next Steps

The use of AI in the banking environment increasingly raises questions that go beyond technology and efficiency: How can ESG goals, regulatory requirements, and economic expectations be reconciled? Which use cases deliver sustainable added value? And where do new ESG and model risks emerge that should be systematically taken into account in planning, including ESG risk planning?

If you would like to share your perspective or discuss practical experience, I would be pleased to exchange views. If you wish, I would also be happy to offer an initial, non-binding conversation on how I could support you in developing an ESG-compliant AI strategy. Please write to me.